Key takeaways

General-purpose AI models misidentify medications, skip patient authentication steps, and route worsening symptoms to scheduling queues. The risks of AI in healthcare are most visible in the contact center, where clinical errors, HIPAA violations, and patient safety failures follow directly from these gaps.

AI trained on generic conversation data misinterprets how patients describe their conditions. A misunderstood medication name or symptom description carries clinical consequences that do not exist in retail or financial services.

AI agents in healthcare require deterministic guardrails for controlled substance identification, worsening symptom escalation, and failed authentication. Probabilistic language models cannot guarantee the correct action on every high-risk interaction.

A purpose-built AI voice agent in healthcare integrates bidirectionally with the EHR: reading medication lists and appointment history, then writing back case dispositions, telephone encounters, and call summaries. Calendar-only integrations leave manual documentation work for human agents.

Evaluate any AI voice agent in healthcare by walking through a controlled substance refill and a failed authentication flow. The escalation logic, audit trail, and EHR write-back behavior will separate healthcare-grade platforms from general-purpose AI sold into the vertical.

Introduction

AI pilots usually start well. Bots confirm appointments, schedule follow-ups, and handle routine queries without issue. Then they go live on clinical workflows.

General-purpose AI does not carry the clinical logic to distinguish a controlled substance from a standard refill, a worsening symptom from a routine complaint, or a partial authentication from a completed one. A patient calling about a controlled substance refill gets incorrect guidance because the bot misread how they described the medication. A patient reporting chest pain gets routed to the general scheduling queue. A patient who provides a date of birth but no phone number gets through without completing verification.

The consequences of these predictable failure scenarios are specific: incorrect clinical guidance, HIPAA exposure, and trust erosion that compounds over time.

Healthcare contact centers operate under clinical, compliance, and safety requirements that general-purpose AI was never designed to meet.

What healthcare requires from AI

Healthcare contact centers operate under four categories of clinical and compliance requirements that determine whether an AI deployment succeeds or fails:

Clinical escalation logic: Controlled substances must be identified and flagged on every interaction. Worsening symptoms must trigger escalation to a human agent. Complex care coordination must route to a clinician. A general-purpose language model does not distinguish between these scenarios because the training data and rule sets were not designed for them.

EHR integration depth: A virtual agent that only checks appointment availability solves one workflow. Clinical workflows require the agent to read medication lists, query insurance status, and write back case dispositions, telephone encounters, and call summaries to the EHR. Read-only integration leaves manual documentation work for human agents; bidirectional integration resolves the patient’s request in the system of record. That is the difference between a lookup tool and a workflow that completes the request.

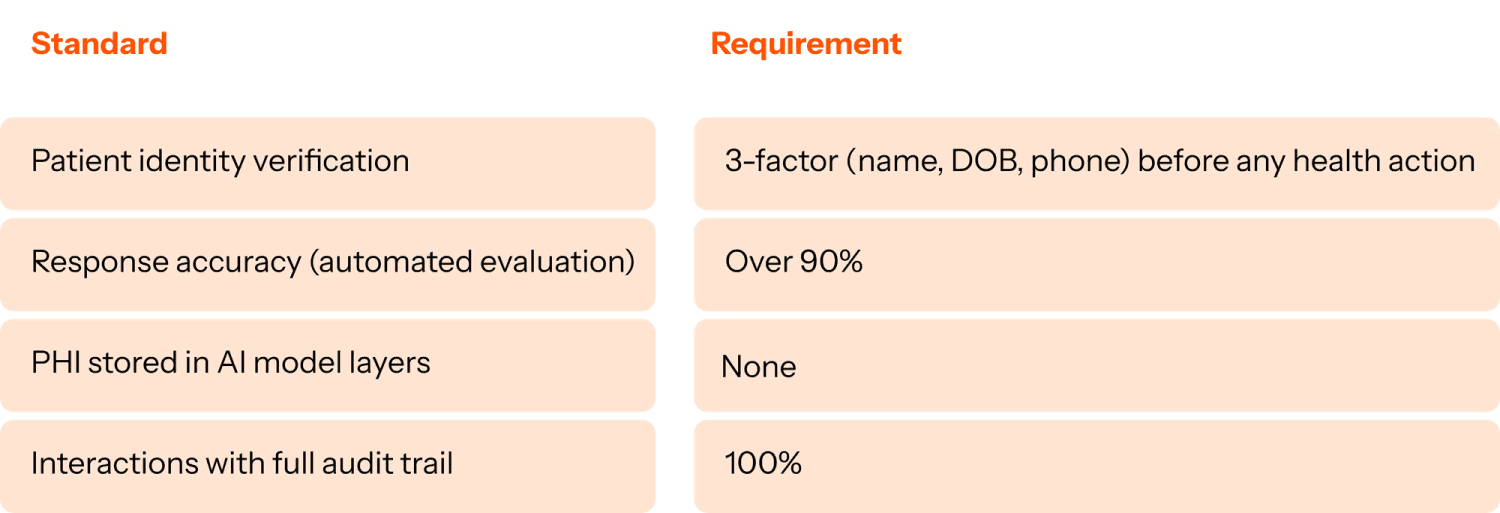

HIPAA compliance at the interaction level: Three requirements apply to every interaction: real-time PII masking during the conversation, zero storage of PHI in AI model layers, and three-factor patient identity verification (name, date of birth, and phone number) before any health-related action. A full audit trail must exist for every interaction so compliance teams can review exactly what the AI virtual agent did and why.

Medical terminology at scale: Patients do not describe conditions in clinical terminology. They say “my refill went to the wrong place” or “it hurts worse than last time.” AI agents in healthcare trained on generic customer service data can misinterpret the clinical significance of these descriptions, which can change the triage outcome.
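The three-factor check described under HIPAA compliance above can be sketched in a few lines. This is a minimal illustration, not a compliance implementation: `PatientRecord`, `verify_identity`, and the matching rules (case-insensitive name, digits-only phone comparison) are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class PatientRecord:
    """Identity fields on file (hypothetical shape, not a real EHR schema)."""
    full_name: str
    date_of_birth: date
    phone: str


def _digits(phone: str) -> str:
    """Keep only digits so '(555) 010-1234' matches '555-010-1234'."""
    return "".join(ch for ch in phone if ch.isdigit())


def verify_identity(
    record: PatientRecord,
    name: Optional[str],
    dob: Optional[date],
    phone: Optional[str],
) -> Tuple[bool, List[str]]:
    """Return (verified, failed_factors). All three factors must match."""
    failed: List[str] = []
    if name is None or name.strip().casefold() != record.full_name.casefold():
        failed.append("name")
    if dob != record.date_of_birth:
        failed.append("date_of_birth")
    # A missing factor counts as a failure, never as a pass.
    if phone is None or _digits(phone) != _digits(record.phone):
        failed.append("phone")
    return (not failed, failed)
```

Under these assumptions, a caller who gives a matching name and date of birth but no phone number gets `(False, ["phone"])`, so the agent would not proceed to any health-related action. Returning the failed factors also gives the audit trail something concrete to record.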

A purpose-built AI agent for healthcare should meet all four of these standards.

What healthcare-grade architecture looks like

Three architectural decisions separate healthcare-grade platforms from general-purpose AI adapted for the vertical.

Training data: real patient interactions: A healthcare-grade virtual agent (VA) trains on real patient-provider conversations, the same interaction data that experienced agents learn from. The training corpus includes clinical language, specialty-specific workflows, and the full variability of how patients describe symptoms, medications, and care needs. When a patient says “my refill went to the wrong place,” the VA maps that statement to the correct system, medication record, and next action.

Deterministic scenario engines for high-risk interactions: Language models are probabilistic. For high-risk interactions such as controlled substance identification, symptom escalation, and patient authentication, probabilistic (i.e., most likely) output creates clinical and compliance exposure. A deterministic scenario engine follows exact business rules for these interactions. The escalation path fires every time, with a fully auditable trail.
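One way to picture a deterministic scenario engine is as a fixed, ordered rule table that returns both an action and the ID of the rule that fired. The sketch below is illustrative only: the `Interaction` fields, the rule IDs, the priority order, and the two-item substance list are assumptions, and a real deployment would rely on a maintained formulary and clinically reviewed criteria.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Tuple


class Action(Enum):
    ESCALATE_TO_HUMAN_AGENT = "escalate_to_human_agent"
    CONTINUE_AUTOMATED = "continue_automated"


# Placeholder list for illustration; not a real formulary.
CONTROLLED_SUBSTANCES = frozenset({"oxycodone", "alprazolam"})


@dataclass(frozen=True)
class Interaction:
    """Structured facts about the call (hypothetical fields)."""
    authenticated: bool
    medication: Optional[str] = None
    symptom_worsening: bool = False


def route(interaction: Interaction) -> Tuple[Action, str]:
    """Return (action, rule_id). Rules are checked in a fixed priority order,
    so the same input always yields the same action and the same rule ID."""
    if interaction.symptom_worsening:
        return Action.ESCALATE_TO_HUMAN_AGENT, "R1_WORSENING_SYMPTOM"
    if not interaction.authenticated:
        return Action.ESCALATE_TO_HUMAN_AGENT, "R2_FAILED_AUTH"
    med = (interaction.medication or "").strip().casefold()
    if med in CONTROLLED_SUBSTANCES:
        return Action.ESCALATE_TO_HUMAN_AGENT, "R3_CONTROLLED_SUBSTANCE"
    return Action.CONTINUE_AUTOMATED, "R0_DEFAULT"
```

The rule ID is what makes the decision reviewable: a compliance team can see which rule fired without interpreting model output. In this sketch the language model would still be responsible for extracting the structured fields; only the routing decision is deterministic.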

Bidirectional EHR integration that fully resolves patient requests: A read-only EHR connection lets the VA personalize responses using the patient’s medication list, appointment history, and insurance status. A write-back connection completes the workflow: the VA creates telephone encounter records, updates case dispositions, and syncs call summaries to the system of record. Human agents do not perform manual documentation after the interaction.
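The read-then-write pattern can be shown with an in-memory stand-in for the EHR. Everything here is hypothetical: `InMemoryEHR`, its method names, the record fields, and the disposition strings are invented for illustration and do not correspond to any vendor's API.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class InMemoryEHR:
    """Stand-in for an EHR client (hypothetical; not a vendor API)."""
    medications: Dict[str, List[str]] = field(default_factory=dict)
    encounters: List[dict] = field(default_factory=list)

    def read_medications(self, patient_id: str) -> List[str]:
        """Read side: return the patient's medication list."""
        return list(self.medications.get(patient_id, []))

    def write_telephone_encounter(
        self, patient_id: str, disposition: str, summary: str
    ) -> dict:
        """Write side: store an encounter with its disposition and summary."""
        record = {
            "patient_id": patient_id,
            "type": "telephone_encounter",
            "disposition": disposition,
            "summary": summary,
        }
        self.encounters.append(record)
        return record


def handle_refill_request(ehr: InMemoryEHR, patient_id: str, requested: str) -> dict:
    """Read the medication list, decide a disposition, and write it back."""
    meds = ehr.read_medications(patient_id)
    on_file = requested.casefold() in {m.casefold() for m in meds}
    disposition = "refill_request_submitted" if on_file else "routed_to_human_agent"
    summary = f"Patient requested refill of {requested}; on medication list: {on_file}."
    return ehr.write_telephone_encounter(patient_id, disposition, summary)
```

A read-only integration would stop after `read_medications`. The write-back call is what leaves an encounter, a disposition, and a summary in the system of record without a human agent documenting the call afterward.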

How to evaluate a healthcare VA platform

The healthcare contact center automation market has expanded. Platforms range from scheduling-focused tools that handle the front end of patient communication to general-purpose AI with broad functionality and no clinical guardrails.

Before committing to a platform, walk through a controlled substance refill flow end to end. Ask to see the escalation logic for worsening symptoms. Ask how the system handles a patient who fails authentication. Ask what data is written back to the EHR after each interaction. Ask what the audit trail looks like and who can access it.

The answers to those questions will reveal whether the platform was built around healthcare constraints or adapted from a different industry.


This is part two of The Patient-First Contact Center, a four-part series for healthcare contact center leaders navigating AI.

Part 1: What healthcare contact centers get wrong about staffing (and what it’s costing them)

Part 3: Why siloed automation at healthcare contact centers fails at patient care

Part 4: Why AI deployment in healthcare takes 6-12 months and how to fix it

FAQ

Why do generic AI chatbots fail in healthcare contact centers?

Generic virtual agents fail in healthcare because they cannot handle the specific requirements that healthcare contact centers operate under: multi-step clinical workflows, bidirectional EHR read/write integration, controlled substance escalation logic, HIPAA-compliant authentication, and the variability of medical terminology in real patient conversations. General-purpose AI systems lack the architecture to meet these requirements. Meeting them takes an architecture that is purpose-built from the ground up.

What is a deterministic scenario engine in healthcare AI?

A deterministic scenario engine is an architectural approach that uses hard-coded, auditable rules to govern high-stakes interactions in a healthcare virtual agent. Instead of relying on a probabilistic language model to decide how to handle a controlled substance refill request or a failed patient authentication, the system follows the same defined rule every time. This gives compliance teams a fully auditable trail and removes from those decisions the hallucination risk that pure LLM-based systems carry in clinical environments.

What does bidirectional EHR integration mean for a virtual agent?

Bidirectional EHR integration means the virtual agent can both read from and write to the electronic health record. Reading allows the VA to personalize responses based on the patient’s actual data: medication lists, appointment history, insurance status. Writing back means the VA can create telephone encounters, update case dispositions, and sync call summaries directly into the system of record, so human agents do not need to perform manual documentation after every interaction.

What are the HIPAA requirements for a healthcare virtual agent?

A HIPAA-compliant healthcare virtual agent must include real-time PII masking so sensitive patient data is redacted during interactions, no storage of protected health information (PHI) in AI model layers, multi-factor patient identity verification (typically name, date of birth, and phone number) before any health-related action is taken, and a full audit trail on every interaction so compliance teams can review exactly what the VA did and why. These requirements apply to both voice and digital channel interactions.

How is a purpose-built healthcare virtual agent different from a general-purpose chatbot?

A purpose-built healthcare virtual agent is trained on real patient-provider interaction data rather than generic conversation data. It integrates bidirectionally with EHR and contact center platforms rather than sitting on top of them. It uses deterministic rules for clinical escalation scenarios rather than relying on probabilistic AI judgment. And it is designed from the ground up to handle HIPAA requirements at the interaction level, not as an add-on compliance layer. A general-purpose chatbot adapted for healthcare carries the constraints of its original architecture. Clinical escalation logic, deterministic rules, and bidirectional EHR integration require purpose-built design.

What questions should healthcare leaders ask when evaluating a virtual agent platform?

The most revealing evaluation questions are operational and specific. Ask what happens when a patient calls about a controlled substance refill: walk through the exact escalation flow. Ask how the system handles a patient who fails authentication. Ask what data is written back to the EHR after each interaction. Ask what the audit trail looks like and who can access it. Ask whether the VA uses deterministic rules or probabilistic AI for high-stakes clinical scenarios. The answers reveal whether the platform was genuinely built for healthcare or built for a different industry and sold into it.