Why Siloed Automation at Healthcare Contact Centers Fails at Patient Care

Key Takeaways

1. Most healthcare contact center teams run AI and human agents as separate systems, which creates inefficiencies instead of savings. When the healthcare chatbot and agents don’t share data or learn from each other, it increases operational costs.

2. There is a context gap during handoffs from a healthcare virtual assistant to a human agent, where patients have to repeat information. This breaks the experience and reduces the impact of contact center automation.

3. AI interactions from a healthcare chatbot are rarely evaluated like human calls, creating a quality gap. Without proper QA in the healthcare contact center, it is hard to measure or improve performance.

4. Human agents solve complex cases, but that knowledge is not fed back into the healthcare virtual assistant. This intelligence gap limits the long-term value of contact center automation.

5. A unified healthcare contact center connects the healthcare chatbot, human agents, and QA systems into one loop. This improves patient experience and makes contact center automation more effective.

Introduction

Healthcare is inherently personal, and CX leaders are now leveraging automation as one of the engines to deliver that human touch at scale. By deploying intelligent virtual assistants for everything from symptom triage to appointment scheduling, organizations intend to absorb the surge in patient inquiries. While these systems are a success on paper, the patient often experiences repetition fatigue.

The legacy healthcare chatbot handles inbound inquiries until it cannot, then transfers to an AI virtual agent or human agent who starts from zero. Under the hood, when the virtual assistant resolves something incorrectly, there is no signal back to the training pipeline for your care team. And when a human agent develops a better way to handle a complex case, that intelligence never reaches the bot.

Because the AI is siloed, the result is an operation that is more expensive and more fragile than if the AI had never been deployed. According to a recent Deloitte report, healthcare providers could lose as much as $54.5 billion over the next decade by failing to offer integrated virtual care.

One healthcare contact center leader described the vision clearly during an implementation review: the goal was to have a virtual agent answer the call, complete patient verification, open a case, summarize what the patient was calling about, and mark it as a telephone encounter without a human intermediary involved at any stage. The gap between that vision and what most deployed systems actually deliver is where the hidden cost lives.

The Three Gaps That Fragmented AI Creates

When virtual and human agents operate as siloed, disconnected systems, three specific and measurable gaps emerge. Each one compounds the others, directly impacting your patient experience.

Gap 1: The Context Gap at Handoff

This is the most visible point of failure for the patient. It happens at the moment the conversation is handed off from the automation layer to care teams. Ideally, when an AI virtual agent transfers to a human agent, the patient should never have to repeat themselves. In practice, when the virtual assistant collects symptoms, policy numbers, appointment preferences, identity, reason for call, and attempted resolutions, that information lives in its own system and does not travel with the call. The human agent starts cold, the patient is frustrated, and the efficiency gains of the AI virtual agent are partially wiped out in the first 60 seconds of the human interaction.

A warm handoff means the agent receives, in real time: a full call summary, the patient sentiment score, identity verification status, and what the AI virtual agent already tried. The patient picks up exactly where they left off. This is not a feature. It is the baseline requirement for running humans and AI as a single CX operation.
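As a rough illustration of what "context that travels with the call" could look like, here is a minimal Python sketch of a handoff payload. The `HandoffContext` class, its field names, and the sentiment range are assumptions made for this example; they do not describe any specific vendor's schema.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class HandoffContext:
    """Context a virtual agent passes to a human agent at transfer (hypothetical schema)."""
    call_summary: str
    sentiment_score: float  # assumed range: -1.0 (negative) to 1.0 (positive)
    identity_verified: bool
    attempted_resolutions: list[str] = field(default_factory=list)

    def needs_recollection(self) -> list[str]:
        """Return the items the human agent would have to collect again."""
        gaps = []
        if not self.call_summary.strip():
            gaps.append("call_summary")
        if not self.identity_verified:
            gaps.append("identity_verified")
        if not self.attempted_resolutions:
            gaps.append("attempted_resolutions")
        return gaps

    def is_warm(self) -> bool:
        """A handoff counts as warm here when nothing has to be collected again."""
        return not self.needs_recollection()


context = HandoffContext(
    call_summary="Patient wants to reschedule a follow-up appointment.",
    sentiment_score=-0.2,
    identity_verified=True,
    attempted_resolutions=["Offered next available slot; patient declined"],
)

# Serialized form that could be delivered to the agent desktop with the call.
payload = json.dumps(asdict(context))
```

A check like `is_warm()` could run at transfer time so that cold handoffs are counted rather than discovered through patient complaints.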

Gap 2: The Quality Gap

QA in healthcare contact centers typically covers a fraction of human calls and essentially none of the bot interactions. The AI virtual agent (VA) is a black box from a quality perspective. You know it resolved or escalated, but not whether it resolved correctly, or whether it communicated clearly, or whether it followed your protocols. Meanwhile, your human agents are being evaluated against carefully designed quality rubrics.

Unified, holistic QA means the same quality rubrics that grade your human agents apply to every VA interaction. You can see where the VA underperforms relative to your best agents, and that data becomes the training signal for continuous improvement.
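One way to picture a shared rubric is a single scoring function that does not care who handled the interaction. The sketch below is illustrative only: the criteria, weights, and the `Interaction` type are assumptions for this example, not an actual QA rubric.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical rubric: criterion name -> weight (weights sum to 1.0).
RUBRIC = {
    "resolved_correctly": 0.5,
    "communicated_clearly": 0.25,
    "followed_protocol": 0.25,
}


@dataclass
class Interaction:
    handled_by: str  # "human" or "va"
    criteria_met: dict[str, bool]


def score(interaction: Interaction) -> float:
    """Weighted rubric score between 0.0 and 1.0, computed the same way for both channels."""
    return sum(
        (
            weight
            for criterion, weight in RUBRIC.items()
            if interaction.criteria_met.get(criterion, False)
        ),
        0.0,
    )


def average_score_by_channel(interactions: list[Interaction]) -> dict[str, float]:
    """Group scores by who handled the interaction and average each group."""
    by_channel: dict[str, list[float]] = {}
    for interaction in interactions:
        by_channel.setdefault(interaction.handled_by, []).append(score(interaction))
    return {channel: mean(scores) for channel, scores in by_channel.items()}


sample = [
    Interaction("human", {"resolved_correctly": True, "communicated_clearly": True, "followed_protocol": True}),
    Interaction("va", {"resolved_correctly": True, "communicated_clearly": True, "followed_protocol": False}),
]
results = average_score_by_channel(sample)
```

Because both channels pass through the same `score` function, any difference in the averages reflects performance rather than a difference in how each side is graded.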

Gap 3: The Intelligence Gap

This is the most expensive gap of all because it prevents your system from getting smarter. Every time a human agent successfully resolves an edge case the VA could not handle, that resolution represents an opportunity to teach the VA. In a fragmented system, that learning is lost. The agent moves on to the next call while the bot continues failing the same edge case.

A continuous improvement loop means the VA is continuously retrained on the best human workflows. When your best agent handles a novel prescription scenario particularly well, that interaction becomes a training signal. The VA gets better from the intelligence that already exists in your operation, without requiring separate AI projects or model retraining cycles.
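The first step of such a loop, deciding which human resolutions are worth feeding back, can be sketched in a few lines of Python. The `ResolvedCase` fields and the 0.9 score threshold are assumptions chosen for illustration; how the selected cases are then used to update the VA is outside the scope of this sketch.

```python
from dataclasses import dataclass


@dataclass
class ResolvedCase:
    case_id: str
    escalated_from_va: bool  # True if the VA could not handle the case
    qa_score: float          # rubric score from QA, assumed 0.0 to 1.0
    resolution_notes: str


def training_candidates(cases: list[ResolvedCase], min_score: float = 0.9) -> list[ResolvedCase]:
    """Pick human resolutions of VA escalations that QA rated highly."""
    return [c for c in cases if c.escalated_from_va and c.qa_score >= min_score]


cases = [
    ResolvedCase("c1", True, 0.95, "Handled a novel prescription refill scenario."),
    ResolvedCase("c2", True, 0.60, "Resolved, but skipped a protocol step."),
    ResolvedCase("c3", False, 0.98, "Routine scheduling call taken directly by an agent."),
]
selected = [c.case_id for c in training_candidates(cases)]
```

Filtering on both conditions keeps the signal focused: only cases the VA failed on, and only resolutions that QA considers worth imitating.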

Beyond the daily frustrations, the true cost of fragmented intelligence is felt in the long-term stability of the health system:

- Lower patient loyalty due to the lack of personal touch, leading to a loss in long-term patient value.

- Higher operational costs as the care team spends time re-verifying information, plus wasted spend on automation because the AI doesn't improve over time.

- Compliance and accuracy risks as manually moving data between a bot transcript and a patient record creates a window for human error.

Bridging the Gap with Unified Intelligence

To fix fragmented care, we need to move away from isolated tools and toward a system where the virtual agent and the human agent share the same brain. This isn't about adding more tools; it’s about ensuring that all your CX automation exists within a single core, all the time.

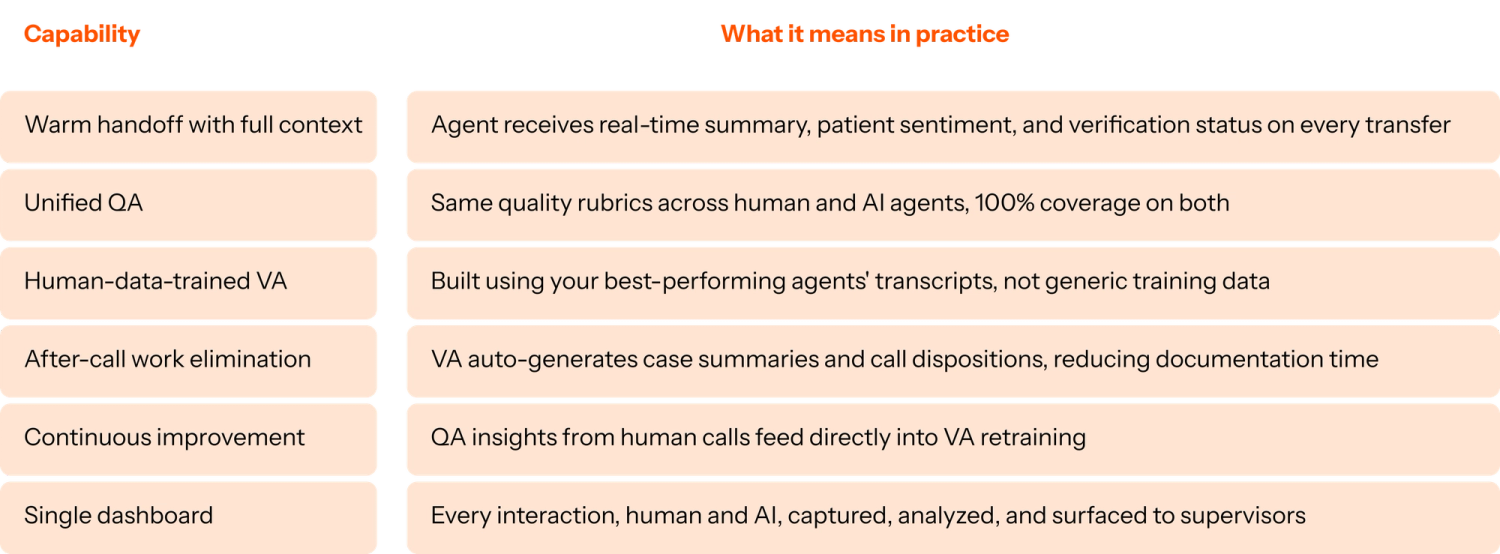

Level AI helps top global CX leaders bridge these gaps by seamlessly unifying CX to deliver a superior patient experience with:

- Real-Time Context Transfer: Our platform ensures that every bit of data collected by the virtual agent, including patient intent, sentiment, and verified history, is delivered to the agent's desktop before the call with care teams even begins.

- Bidirectional Learning Loops: Unlike siloed bots, Level AI creates a feedback system where human expertise directly improves AI performance. When a human agent successfully navigates a complex clinical triage, that logic is captured to sharpen the VA’s future responses.

- Proactive Agent Assistance: While the human agent is speaking, Level AI works in the background to surface relevant clinical guidelines and insurance policies, ensuring the agent has the intelligence they need to provide accurate care without searching through multiple tabs.

- End-to-end Patient Journey Mapping: From the first "hello" to the VA through the final resolution with the human agent, Level AI empowers CX teams to see whether the bot’s triage actually helped the agent or just created more confusion.

What Unified Human and AI Operations Look Like in Practice

The growth of the healthcare virtual assistant market means it is now straightforward to deploy scheduling automation. What is much rarer is a platform that connects scheduling automation to the human agents who handle everything else, and then connects that whole operation to a quality and intelligence layer that makes both sides better over time.

That is the difference between deploying a healthcare virtual assistant and transforming your contact center. The transformation shows up as a measurable change in how clinical and non-clinical staff spend their time, and a measurable improvement in the patient experience that your leadership team can actually report on.

Wrapping Up

If you are evaluating whether your current AI setup is working as a unified system, these are the signals to track:

- What percentage of VA-to-human transfers result in the patient repeating information they already gave the bot?

- How many bot interactions are reviewed by QA each month compared to human agent calls?

- When the VA makes an error, what is the feedback loop back to training?

- How long does it take for a human-agent resolution pattern to show up in VA behavior?

If the answers to those questions are "we do not know" or "there is not one," you are running two separate systems. And the cost of that fragmentation compounds every week.
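The first two signals are simple ratios once the underlying events are logged. The sketch below shows one hedged way to compute them in Python; the `Transfer` record and the function names are hypothetical, and returning `None` when there is no data is a deliberate choice so that "we do not know" stays visible instead of being reported as zero.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Transfer:
    """One VA-to-human transfer (hypothetical record)."""
    patient_repeated_info: bool


def repeat_rate(transfers: list[Transfer]) -> Optional[float]:
    """Share of transfers where the patient had to repeat information; None if no data."""
    if not transfers:
        return None
    return sum(t.patient_repeated_info for t in transfers) / len(transfers)


def qa_coverage(reviewed: int, total: int) -> Optional[float]:
    """Share of interactions reviewed by QA; None if there were no interactions."""
    if total == 0:
        return None
    return reviewed / total


transfers = [Transfer(True), Transfer(True), Transfer(True), Transfer(False)]
rate = repeat_rate(transfers)               # 3 of 4 transfers required repetition
va_coverage = qa_coverage(0, 1200)          # no bot interactions reviewed
human_coverage = qa_coverage(500, 2000)     # a fraction of human calls reviewed
```

Tracking the same coverage ratio for both channels makes the quality gap a number that can be reported month over month.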

Explore what a unified contact center approach looks like in healthcare →

This is part three of The Patient-First Contact Center, a four-part series for healthcare contact center leaders navigating AI.

Part 1: What Healthcare Contact Centers Get Wrong About Staffing (And What It's Costing Them)

Part 2: The Risks of AI in Healthcare (And What Purpose-Built AI Actually Looks Like)

Part 4: Why AI Deployment in Healthcare Takes 6-12 Months and How to Fix It

FAQs

Q: What is a warm handoff in a healthcare contact center?

A warm handoff is when a virtual agent transfers a call to a human agent and passes along all relevant context collected during the interaction: a call summary, the patient's identity verification status, their sentiment score, and what the VA already attempted to resolve. The patient does not have to repeat themselves. In most healthcare contact center deployments today, this context does not travel with the call, forcing patients to re-verify their identity and re-explain their reason for calling every time they are transferred.

Q: Why should QA cover virtual agent interactions in healthcare?

Most healthcare QA programs evaluate only human agent calls, leaving virtual agent performance entirely unmonitored. This creates a blind spot: the VA may be resolving calls incorrectly, communicating in ways that erode patient trust, or failing to follow clinical protocols, with no visibility into any of it. Applying the same quality rubrics to VA interactions as human interactions gives QA teams 100% coverage across the full contact center operation and surfaces where the VA needs improvement before those errors compound.

Q: How does a virtual agent learn from human agents?

In a unified platform, QA insights from human agent calls feed directly into VA retraining. When a human agent resolves an edge case particularly well, that interaction is captured, analyzed, and used as a training signal to update VA behavior. In a fragmented system, this learning loop does not exist: human intelligence stays in the human operation and the VA continues failing the same edge cases indefinitely.

Q: What is the cost of fragmented AI in a healthcare contact center?

Fragmented AI creates three compounding costs. First, the context gap at handoff forces patients to repeat themselves, eroding the patient experience and partially negating the efficiency gains of the VA. Second, the quality gap means bot performance goes unmonitored while human agents are evaluated, creating inconsistent standards across the operation. Third, the intelligence gap means the VA cannot improve from human agent expertise, so performance plateaus rather than compounds over time.

Q: What is unified contact center AI in healthcare?

Unified contact center AI connects virtual agents and human agents in a single platform with shared quality standards, shared context on every handoff, and a continuous improvement loop where each side learns from the other. Rather than deploying a VA as a standalone bot and a separate QA system for human agents, a unified approach treats the entire patient interaction, virtual and human, as one continuous operation with one set of performance standards and one intelligence layer improving both over time.

Q: How do you evaluate whether a healthcare virtual agent deployment is working?

The most revealing signals are operational: what percentage of VA-to-human transfers result in patients repeating information already collected by the bot; how many VA interactions are reviewed by QA each month compared to human agent calls; what the feedback loop is from VA errors back to training; and how long it takes for a human-agent resolution pattern to show up in VA behavior. If the answers to these questions are unknown or absent, the VA and human operations are running as separate systems, regardless of what the vendor contract says.
